Optimizing Matrix Multiply

(Due 6/25/2002)

Problem

You will optimize a routine to multiply square matrices. Matrix multiplication is a basic building block in many scientific computations; and since it is an O(n3) algorithm, these codes often spend a lot of their time in matrix multiplication. The most naive code to multiply matrices is short, sweet, simple, and very slow:

for i = 1 to n

for j = 1 to m

for k = 1 to m

C(i,j) = C(i,j) + A(i,k) * B(k,j)

end

end

end

We're providing implementations of the trivial unoptimized matrix multiply code in C and Fortran, and a very simple blocked implementation in C. We're also providing a wrapper for the BLAS (Basic Linear Algebra Subroutines) library. The BLAS is a standard interface for building blocks like matrix multiplication, and many vendors provide optimized versions of the BLAS for their platforms. You may want to compare your routines to codes that others have optimized; if you do, think about the relative difficulty of using someone else's library to writing and optimizing your own routine. Knowing when and how to make use of standard libraries is an important skill in building fast programs!

The unblocked routines we provide do as poorly as you'd expect, and the blocked routine provided doesn't do much better.

Implementation

You need to write a dgemm.c that contains a function with the following C signature:

void

square_dgemm (const unsigned M,

const double *A, const double *B, double *C)

If you would prefer, you can also write a Fortran function

in a file fdgemm.f:

subroutine sdgemm(M, A, B, C)

c

c .. Parameters ..

integer M

double precision A(M,M)

double precision B(M,M)

double precision C(M,M)

Note that the matrices are stored in column-major order.

Also, your program will actually be doing a multiply and add operation:

C := A*B + C

Look at the code in basic_dgemm.c if you

find this confusing.

The necessary files are in tuning-matmul.tar. Included are the following:

- Makefile

- a sample Makefile, with some useful compiler options,

- basic_dgemm.c

- a very simple square_dgemm implementation,

- blocked_dgemm.c

- a slightly more complex square_dgemm implementation

- basic_fdgemm.f

- a very simple Fortran square_dgemm implementation,

- f2c_dgemm.c

- a wrapper that lets the C driver program call the Fortran implementation,

- wrap_dgemm.f

- another wrapper that lets the C driver program call the dgemm routine in BLAS implementations,

- matmul.c

- the driver program, and

- timing.gnuplot

- a sample gnuplot script to display the results.

You have your choice of platforms on which to do your development and testing:

-

800 MHz Intel Itanium, Linux (mini.cs)

Intel C and Fortran compilers (ecc and efc) -

733 MHz Intel Itanium, HP-UX (yugo.cs)

HP C and Fortran compilers (/opt/ansic/bin/cc and /opt/fortran90/bin/f90) -

1.5 GHz Pentium 4, Linux (saab.cs)

Intel C and Fortran compilers (icc and ifc) -

333 MHz Sun Ultra2i, SunOS (infiniti.cs, vw.cs, maruti.cs, redwood.cs, aquarium.cs)

Sun C and Fortran compilers (cc and f77/f90) -

500 MHz Intel Pentium III, Linux (si.cs)

Intel C and Fortran compilers (icc and ifc)

The y-axis in the gnuplot script plots is labeled MFlop/s, but really it should be "MFlop/s assuming you were using the standard algorithm." If you use something like Strassen's, we will still measure time/(2n3).

You will get a lot of improvement from tiling, but you may want to play with other optimizations, too. Strassen-like algorithms, copy optimizations, recursive data layout, ... you can get some pretty elaborate optimization strategies. We'll cover some of the possibilities in discussion, but you might want to do some independent reading, too.

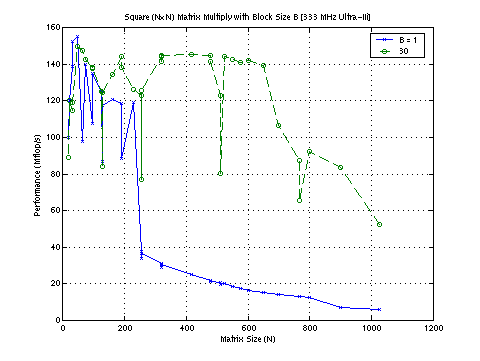

Here is one particularly good example done last year. This handily beat the vendor implementation on square matrix sizes (by 5-10% on large sizes) on a Sun Ultra I/170 workstation.

Submission

Your group needs to submit your group's dgemm.c, Makefile (for compiler options) and a write-up. Your write-up should contain

- the names of the people in your group,

- the optimizations used or attempted,

- the results of those optimizations,

- the reason for any odd behavior (e.g., dips) in performance, and

- how the same kernel performs on a different hardware platform.

To show the results of your optimizations, include a graph comparing your dgemm.c with the included basic_dgemm.c. For the last requirement, try your tuned dgemm.c on another hardware platform (like the NoW machines or Millennium or your home machine) and explain why it performs poorly. Your explanations should rely heavily on knowledge of the memory hierarchy. (Benchmark graphs help.)

Resources

- You will find the notes that accompany this assignment in PowerPoint or HTML.

- I've collected a bunch of processor manuals for you here. There might be a compiler manual or two there as well.

- The makefile stubs here include some interesting compilers and compiler options which you can use as a starting point (warning: these might not be up to date). You are encouraged to try any others.

- We've collected the associated files in a page, and the required matrix multiplication files are packages in a tar file.

- Thanks to Norm Zhou for a great page on IA-32 implementations (including the one we're using).

- Need information on gcc compiler flags? Read the manual. Want to do inline assembly in gcc? Here's a starting point. Be careful setting down the road to inline assembly, though. The compiler will usually be better than you about scheduling individual ops, if you give it careful enough instructions.

- The manuals for the Pentium III processor are available online. You'll probably actually want to look at the one labeled "Pentium 4 Processor Optimization".

- To get more information on the performance of the memory hierarchy on your machine, use a previous class's membench program. Another useful benchmark is available as lat_mem_rd benchmark in lmbench. For more information on interpreting the results of the memory benchmark membench, see the following paper (.ps) by Krishnamurthy, Yelick, et al.

- The optimizations used in PHiPAC and ATLAS may be interesting. Note: You cannot use PHiPAC or ATLAS to generate your matrix multiplication kernels. You can write your own code generator, however. You might want to skim the tech report (.ps.gz) on PHiPAC. The section on C coding guidelines will be particuarly relevant.

- There is a classic paper (.ps) on the idea of cache blocking in the context of matrix multiply by Monica Lam, et al.

- Several folks have tried to automatically get cache locality by basing their matrix storage organization around space-filling curves. There is a fun paper by Chatterjee on the topic that I found while looking for the classic paper by Gustavson, "Recursion leads to automatic variable blocking for dense linear algebra." (Thanks to Donald Chai for the pointer to the online version of Gustavson's paper).

- If you are not familiar with Citeseer, now is a good time to learn about it. If you follow the cross-references in the link to the page for Chatterjee's paper above, you'll run into lots of interesting idea.

- Previous years have worked on variations of this assignment. Check out CS 267 Spring 2001, Spring 1999, Spring 1998 (?), and Spring 1996. The last one has an interesting twist; some students beat IBM's own matrix multiplication routines.

- It never hurts to review material on caches and superscalar processors from CS 61C (there is a link to Brian Harvey's video-taped lectures), or the first lecture by Dave Patterson in CS 252 (Spring 2001). Introductory slides (PowerPoint or PDF) from a talk on this stuff this summer. You might also be interested in some of the other readings on the BeBOp page.